
11/9/2023 Inpaint

The rate of producing another head is very low. Outpainting leaves some traces of edges, but they are not very noticeable.

This particular setup expands the canvas evenly to the specified dimensions, with the input image at the center of the canvas.

- Resize Mode should be set to Resize and Fill (this is the setting that lets you outpaint).
- Try experimenting with both preprocessor types, as their output can differ greatly.
- Control Mode needs to be set to 'ControlNet is more important'.
- inpaint_only + lama is another context-aware fill preprocessor, but it uses LaMa as an additional pass to help guide the output and make the end result cleaner.
- Preprocessor can be inpaint_only or inpaint_only + lama.
- Model should be control_v11p_sd15_inpaint from the official ControlNet repository.

(I forgot to adjust the Hi-Res steps in the screenshot; I normally use 10 steps.) Set the upscaler settings to what you would normally use for upscaling.

Positive Prompt:

1girl,blue_hair,purple_eyes,long_hair,blue_dress,frills,long_sleeves,(bonnet),medium_breasts,looking at viewer, blush,smile,town,country_side,outdoors,dusk,bow,hair ornament, frilled dress,european clothes,medieval,stone, castle, chimney,old,gothic,from_side

Negative Prompt:

Duplicated, disfugured, deformed, poorly drawn, low quality eyes, border, comic, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, (worst quality, low quality:1.4),normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name, pixels, censored, verybadimagenegative_v1.3, nsfw,EasyNegativeV2

The ControlNet inpaint-only preprocessors use a Hi-Res pass to help improve the image quality, which gives them some ability to be 'context-aware.' The recommended CFG according to the ControlNet discussions is 4, but you can play around with the value if you want.
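For readers who drive the web UI through its HTTP API instead of the browser, the settings above could be collected into a single txt2img request body. The following is only a sketch: the function name `build_outpaint_payload` is hypothetical, the key names reflect my understanding of the A1111 and ControlNet API fields and may differ between versions, and the exact `module` and `model` strings (including any hash suffix on the model name) should be checked against your own install. The 768x768 size, 2x Hi-Res Fix, 10 Hi-Res steps, and CFG of 4 are the values mentioned in this guide.

```python
def build_outpaint_payload(prompt, negative_prompt, image_b64, upscaler):
    """Assemble a txt2img request body for ControlNet outpainting.

    All key names are assumptions about the web UI API; verify them
    against the version you have installed before relying on them.
    """
    controlnet_unit = {
        "input_image": image_b64,                      # source image, base64 encoded
        "module": "inpaint_only+lama",                 # or "inpaint_only"
        "model": "control_v11p_sd15_inpaint",          # may need a hash suffix
        "resize_mode": "Resize and Fill",              # this is what enables outpainting
        "control_mode": "ControlNet is more important",
    }
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "width": 768,                  # base render size used in this guide
        "height": 768,
        "cfg_scale": 4,                # value recommended in the ControlNet discussions
        "enable_hr": True,             # Hi-Res Fix
        "hr_scale": 2,                 # 768 * 2 = 1536 on the final canvas
        "hr_upscaler": upscaler,       # whatever you normally use
        "hr_second_pass_steps": 10,
        "alwayson_scripts": {"controlnet": {"args": [controlnet_unit]}},
    }
```

The function only builds a dictionary, so it can be inspected or dumped to JSON before anything is sent to the server.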
While this preprocessor supports context-aware outpainting, which is generation without a positive or negative prompt, I have personally found that it works better with some prompt included. This is the prompt that was used to generate the image below, and it is the same one that I will be using for outpainting. (I am also using the MultiDiffusion extension to help reduce VRAM usage.) More specifically, I am rendering at 768x768 with a Hi-Res Fix of 2x. In this example, I will be outpainting a 1024x1536 image to 1536x1536.
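To make the canvas arithmetic concrete, here is a small sketch of how much new area is generated when the input image sits at the center of the final canvas. The helper name `centered_padding` is hypothetical and is not part of the web UI or ControlNet; it only works out the numbers for the 1024x1536 to 1536x1536 example.

```python
def centered_padding(src_w, src_h, dst_w, dst_h):
    """Return (left, top, right, bottom) padding in pixels needed to
    center a src_w x src_h image on a dst_w x dst_h canvas."""
    if dst_w < src_w or dst_h < src_h:
        raise ValueError("target canvas must be at least as large as the source")
    extra_w = dst_w - src_w
    extra_h = dst_h - src_h
    left = extra_w // 2
    top = extra_h // 2
    # Any odd leftover pixel goes to the right/bottom side.
    return left, top, extra_w - left, extra_h - top


# The example from this guide: 1024x1536 outpainted to 1536x1536.
print(centered_padding(1024, 1536, 1536, 1536))  # (256, 0, 256, 0)
```

So in this example the height is unchanged and 256 pixels of new content are generated on each side of the original image.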

You can still run it without Hi-Res Fix, but the quality won't be as good. To use this functionality, it is recommended to use ControlNet in txt2img with Hi-Res Fix enabled. The context-aware preprocessors are installed automatically with the extension, so there aren't any extra files to download. You will need the A1111 webui at version 1.3 or later and ControlNet 1.1.222 with the control_v11p_sd15_inpaint.pth inpainting model installed.

Overall, I will try to keep this guide as simple as possible and provide some minor findings. However, it is a tremendous step forward for the open-source community and adds a lot of potential for image editing. This particular preprocessor was designed to mimic Adobe's context-aware generative fill and shares several of its properties, but it isn't a 100% replica. In this guide, I will mostly cover the outpainting aspect, as I haven't been able to figure out how to fully manipulate this preprocessor for inpainting. The ControlNet extension has recently included a new inpainting preprocessor that has some incredible capabilities for outpainting and subject replacement.

This has resulted in outpainting discussions being uncommon and rarely posted. The older outpainting-based extensions offer a better quality of life for outpainting, but the underlying issue of generating multiple heads is not fixed, and the process often damages the image by leaving hard edges.
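If you want to check the version requirements mentioned above in a script, a comparison along these lines may help. It is a minimal sketch: `parse_version` and `meets_minimum` are hypothetical helpers, they assume plain dotted numeric version strings (no commit hashes or suffixes), and treating 1.1.222 as a minimum ControlNet version is my reading of the requirement.

```python
def parse_version(text, width=3):
    """Turn a dotted version string such as '1.1.222' into a tuple of ints,
    padding short versions with zeros so '1.3' compares equal to '1.3.0'."""
    parts = [int(part) for part in text.strip().lstrip("v").split(".")]
    parts += [0] * (width - len(parts))
    return tuple(parts)


def meets_minimum(installed, minimum):
    """True if the installed version is at least the minimum version."""
    return parse_version(installed) >= parse_version(minimum)


# Minimums taken from this guide.
WEBUI_MINIMUM = "1.3"
CONTROLNET_MINIMUM = "1.1.222"
```

For example, `meets_minimum("1.1.221", CONTROLNET_MINIMUM)` is false, while `meets_minimum("1.3.0", WEBUI_MINIMUM)` is true.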

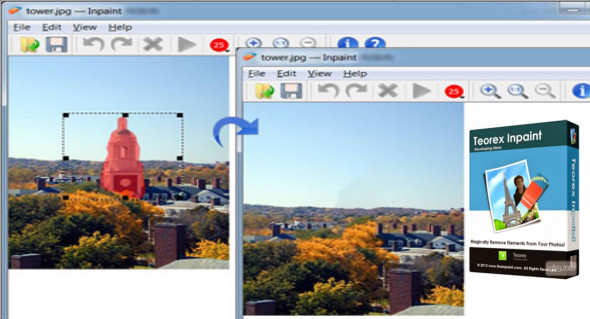

Outpainting is one of a few methods used to create a larger image by expanding the canvas of an existing image, but it has a notorious reputation for being very difficult to work with, which has led the community to prefer one-shot generations for widescreen displays.
